A comprehensive Robotic Process Automation and Data Management ecosystem architected and built from scratch - acting as a central integration hub seamlessly bridging modern web portals, closed legacy desktop applications, local databases, and cloud services (Google Workspace).

Eliminates thousands of hours of manual labor by fully automating mass report downloading, unstructured PDF data extraction, spreadsheet synchronization, and dynamic document generation. Scales to 1.3M+ records without UI freezing.

ATLAS - Fully Autonomous Desktop AI Agent

A fully autonomous AI agent running entirely on your local machine via Ollama. No cloud, no subscriptions, no data leaving your PC. ATLAS plans tasks in plain language, breaks them into steps, picks the right tool for each step, executes everything, verifies the result, and self-heals on errors with automatic retries. Runs from a single Python file and supports Qwen, Llama, Mistral, Gemma, and any other Ollama model.
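The execute, verify, and retry part of that loop might look roughly like the sketch below. It is an illustration only, not the ATLAS source: `run_plan`, the tool registry, and the `verify` callback are hypothetical names, and the plan is passed in as a ready-made list rather than produced by a language model.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical tool registry type: tool name -> callable taking one string argument.
Tool = Callable[[str], str]


def run_plan(
    plan: List[Tuple[str, str]],
    tools: Dict[str, Tool],
    verify: Callable[[str, str], bool],
    max_retries: int = 3,
) -> List[str]:
    """Run each (tool_name, argument) step, verifying results and retrying failures."""
    results = []
    for tool_name, argument in plan:
        last_error = "no attempts made"
        for attempt in range(1, max_retries + 1):
            try:
                output = tools[tool_name](argument)
            except Exception as exc:  # the tool crashed: remember why, then retry
                last_error = f"{type(exc).__name__}: {exc}"
                continue
            if verify(tool_name, output):
                results.append(output)
                break  # step succeeded, move on to the next one
            last_error = f"verification failed on attempt {attempt}"
        else:
            # The retry loop ended without a verified result.
            raise RuntimeError(f"step {tool_name!r} failed: {last_error}")
    return results
```

A real agent would also feed `last_error` back to the model so the next attempt can change strategy; this sketch simply repeats the same call.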

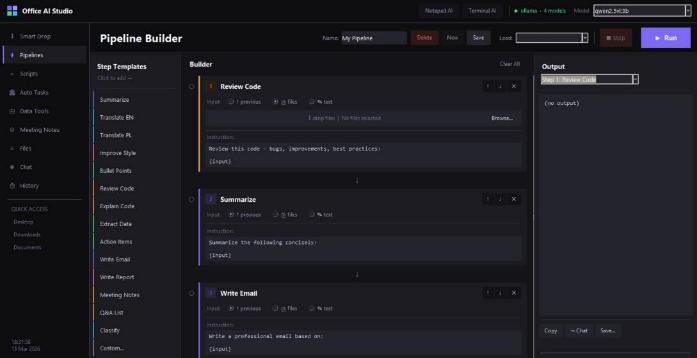

Office AI Studio

Open-source, 100% local AI automation suite for office work. Smart Drop Zone for drag-and-drop actions, Pipeline Builder for multi-step workflows, Python script automation, Auto Tasks triggered by file rules, CSV/data tools, and Meeting Notes → tasks + email drafts. Works with Llama, Mistral, Bielik and any Ollama model.

GroqAgent - Natural Language Computer Control

Control your Windows 11 computer with natural language. Combines Groq's LLMs with a full tool suite: browser automation (click, fill forms, JS execution), file operations, Excel creation with charts, CMD/PowerShell, and web fetching. Features dual-model routing - auto-selects between fast (Llama 3.1-8b) and smart (Llama 3.3-70b) based on task complexity.
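One simple way to route between a fast and a smart model is a keyword-and-length heuristic, sketched below. The heuristic, the keyword list, and the model labels are assumptions made for illustration; the project's actual routing logic is not described here.

```python
import re

# Placeholder labels, not real Groq model identifiers.
FAST_MODEL = "fast-8b"
SMART_MODEL = "smart-70b"

# Hypothetical hint words that suggest multi-step reasoning.
COMPLEX_WORDS = {"analyze", "compare", "plan", "refactor", "summarize", "chart"}


def pick_model(task: str, word_limit: int = 25) -> str:
    """Return the smart model for long or reasoning-heavy tasks, else the fast one."""
    words = re.findall(r"[a-z0-9]+", task.lower())
    looks_complex = len(words) > word_limit or any(w in COMPLEX_WORDS for w in words)
    return SMART_MODEL if looks_complex else FAST_MODEL
```

Matching whole words rather than substrings avoids false hits such as "plan" inside "airplane".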

Agentflow - Autonomous AI Market Research Agency

A fully functional multi-agent system built with CrewAI and Google Gemini 2.5 Flash. Agents have live web access via DuckDuckGo search and collaborate in a hierarchical process: Manager → Internet Researcher → Market Analyst → Report Director. Outputs clean, professional Markdown business reports. 100% free to run using Gemini's free tier.

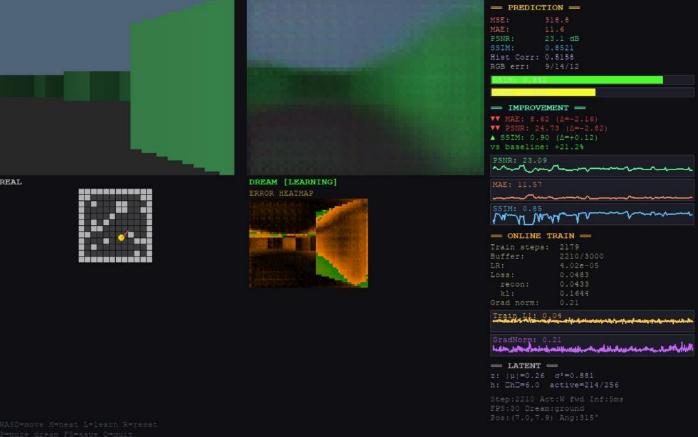

Mini Dreamer - Interactive World Model with Real-Time Online Learning

A minimal but complete world model system that learns to predict future observations while you explore a procedurally generated 3D environment in real time. Every step becomes training data fed into a replay buffer; a mini-batch gradient update fires every step. Features CNN Encoder → 64-dim VAE latent, GRU-based RSSM (256-dim) for temporal dynamics, and a "Pure Dream Mode" where the model generates predictions from its own previous outputs - letting you watch errors compound and representation quality degrade.
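The "every step is training data, every step triggers an update" loop can be sketched with a toy model. The example below swaps the CNN/VAE/RSSM stack for a single learnable weight and uses made-up dynamics (`next_obs = 0.5 * obs`), so it shows only the replay-buffer pattern, not the actual Mini Dreamer code.

```python
import random
from collections import deque


class ReplayBuffer:
    """Fixed-size store of (obs, next_obs) transitions; oldest entries drop out."""

    def __init__(self, capacity):
        self.items = deque(maxlen=capacity)

    def add(self, obs, next_obs):
        self.items.append((obs, next_obs))

    def sample(self, batch_size, rng):
        k = min(batch_size, len(self.items))
        return rng.sample(list(self.items), k)


def train_online(steps=500, batch_size=8, lr=0.1, seed=0):
    """Interleave acting and learning: one new transition, one gradient step."""
    rng = random.Random(seed)
    buffer = ReplayBuffer(capacity=1000)
    w = 0.0  # toy model: predicted next_obs = w * obs
    for _ in range(steps):
        obs = rng.uniform(-1.0, 1.0)  # stand-in for an exploration step
        next_obs = 0.5 * obs          # toy "true" dynamics (assumption)
        buffer.add(obs, next_obs)
        batch = buffer.sample(batch_size, rng)
        # Gradient of the mean squared prediction error with respect to w.
        grad = sum(2.0 * (w * o - n) * o for o, n in batch) / len(batch)
        w -= lr * grad
    return w
```

Under these toy assumptions, `train_online()` should return a weight close to 0.5, the coefficient of the made-up dynamics.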

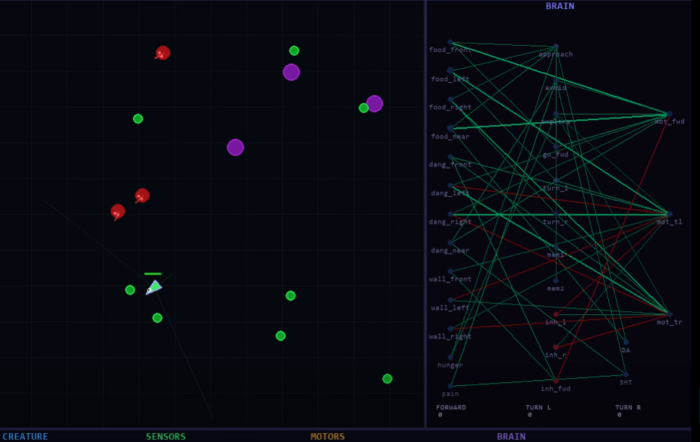

Bio-Brain - Biologically Faithful Artificial Brain

A spiking neural network with real Izhikevich neuron dynamics running a virtual creature that learns to survive. 29 spiking neurons, STDP learning (from Bi & Poo 1998 rat hippocampus research), dopamine/serotonin reward system (three-factor learning rule), and Dale's law enforced (excitatory/inhibitory segregation). No backpropagation - purely local spike timing and neuromodulation. One Python file.
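For reference, a single Izhikevich neuron can be simulated in a few lines. The sketch below uses the standard published model equations with the common "regular spiking" parameters and a plain Euler step; the function name, step size, and input current are illustrative choices, not taken from the Bio-Brain source.

```python
def simulate_izhikevich(current=10.0, duration_ms=1000.0, dt=0.5,
                        a=0.02, b=0.2, c=-65.0, d=8.0):
    """Return spike times (ms) of one Izhikevich neuron driven by a constant current.

    Model: v' = 0.04*v^2 + 5*v + 140 - u + I,  u' = a*(b*v - u),
    with reset v <- c, u <- u + d whenever v reaches 30 mV.
    """
    v = c        # membrane potential (mV)
    u = b * v    # recovery variable
    spike_times = []
    for step in range(int(duration_ms / dt)):
        if v >= 30.0:  # spike detected: record it and reset
            spike_times.append(step * dt)
            v = c
            u += d
        v += dt * (0.04 * v * v + 5.0 * v + 140.0 - u + current)
        u += dt * a * (b * v - u)
    return spike_times
```

With no input current the neuron settles at rest and stays silent; with a constant positive drive such as the default it fires repeatedly.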

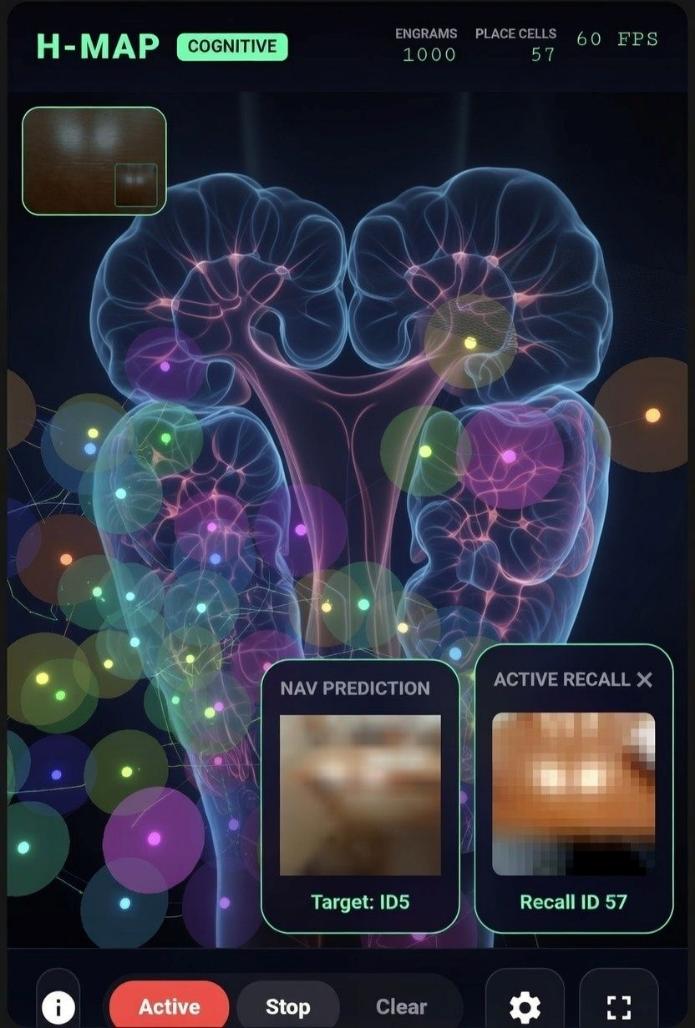

H-Map - Cognitive Memory System Inspired by the Hippocampus

An experimental cognitive app that builds a dynamic memory map from live camera input using 400-dim vector embeddings (digital engrams). Visually similar frames cluster into artificial Place Cells, forming a navigable cognitive map. Includes a predictive layer that anticipates upcoming visual states and a reinforcement layer (reward/punishment) that shapes system behavior. Real-time, self-organizing - no neural network classifier.
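Clustering similar frames into place cells can be sketched as online nearest-centroid clustering. The version below assumes cosine similarity and a fixed threshold, and uses 3-dimensional vectors for readability; the class name and threshold are hypothetical, and the app's real embedding and clustering method may differ.

```python
import math


def cosine(a, b):
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class PlaceCellMap:
    """Each place cell is the running mean of the embeddings assigned to it."""

    def __init__(self, threshold=0.9):
        self.threshold = threshold  # assumed similarity cut-off
        self.centers = []
        self.counts = []

    def observe(self, embedding):
        """Assign the embedding to the most similar cell, or create a new cell."""
        best, best_sim = None, -1.0
        for i, center in enumerate(self.centers):
            sim = cosine(embedding, center)
            if sim > best_sim:
                best, best_sim = i, sim
        if best is not None and best_sim >= self.threshold:
            n = self.counts[best] + 1
            self.centers[best] = [c + (x - c) / n
                                  for c, x in zip(self.centers[best], embedding)]
            self.counts[best] = n
            return best
        self.centers.append(list(embedding))
        self.counts.append(1)
        return len(self.centers) - 1
```

Feeding it two nearly identical vectors followed by an orthogonal one yields two cells: the first two observations share a cell, the third opens a new one.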

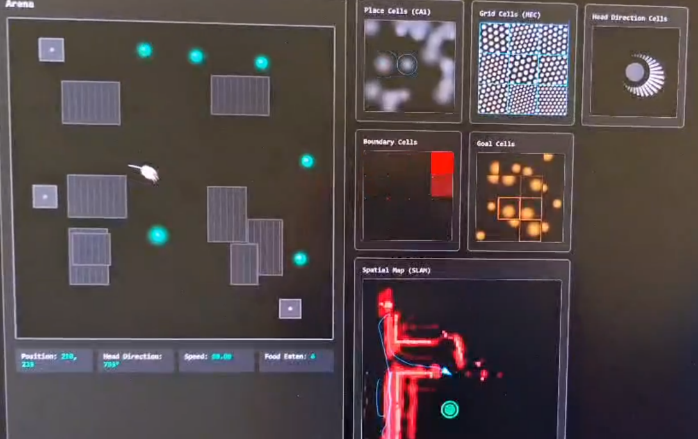

Biologically Inspired Mouse Navigation Simulator

A navigation agent built on place cells, grid cells, head-direction cells, and SLAM-like memory. The agent explores an arena, avoids obstacles, builds a spatial map, and finds food autonomously - all driven by cell types found in the mammalian hippocampus and neighboring navigation circuits such as the entorhinal cortex.

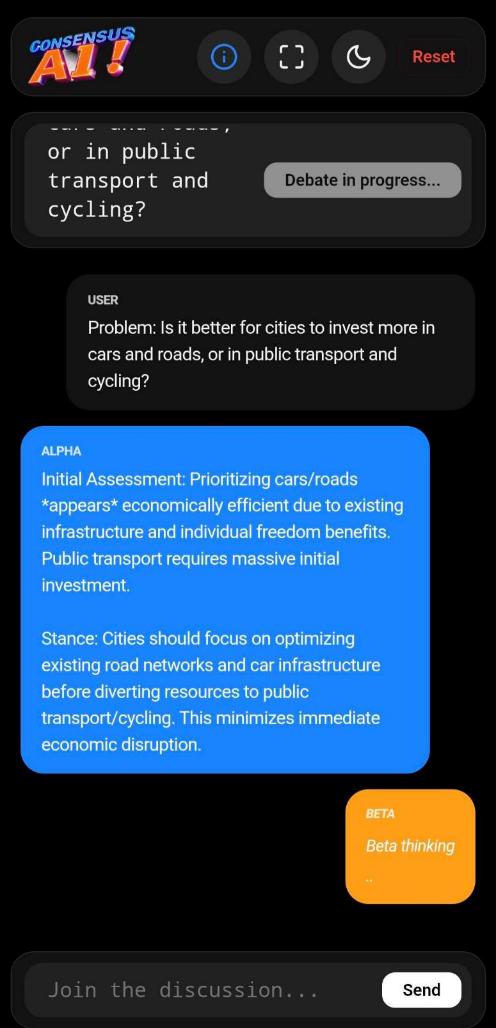

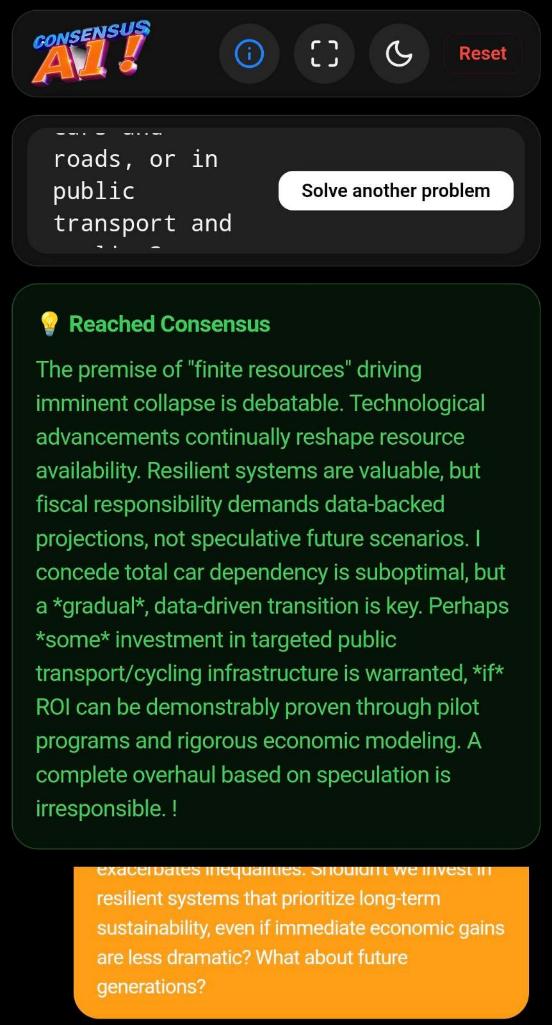

Consensus AI - Dual-Agent Deliberative Reasoning

Instead of instant answers, two AI personas start from opposite viewpoints on your problem, debate, challenge each other's arguments, refine positions, and converge toward common ground. Useful for difficult decisions, complex tradeoffs, and exploring multiple perspectives before reaching a conclusion.

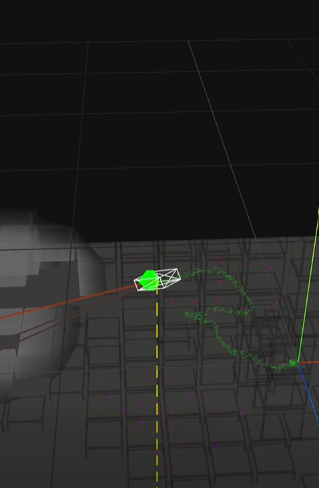

Collision Field AR - Real-Time Obstacle Detection

AR app that perceives surroundings in real time using a single monocular camera in a mobile browser. Detects walls, floors, furniture, stairs, and drop-offs; builds a local 3D occupancy grid with an attention mechanism that updates only currently observed regions. Provides visual red-edge warnings, haptic feedback, a live top-down mini-map, and records 3D camera trajectories. No LiDAR, no cloud, no app install.
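The idea of updating only the currently observed regions of an occupancy grid can be illustrated with a standard log-odds update. The sketch below is 2D rather than 3D for brevity, and the class name and increment values are assumptions, not the app's actual parameters.

```python
import math


class OccupancyGrid:
    """2D grid of log-odds values; 0.0 means unknown (probability 0.5)."""

    def __init__(self, width, height):
        self.log_odds = [[0.0] * width for _ in range(height)]

    def update(self, observations, hit=0.85, miss=-0.4):
        """Apply evidence only to observed cells; all other cells are left untouched.

        observations: dict mapping (x, y) -> True if the cell looked occupied.
        """
        for (x, y), occupied in observations.items():
            self.log_odds[y][x] += hit if occupied else miss

    def probability(self, x, y):
        """Convert the stored log-odds back to an occupancy probability."""
        return 1.0 / (1.0 + math.exp(-self.log_odds[y][x]))
```

Because evidence accumulates additively, repeated sightings of the same obstacle push its cell toward certainty while unobserved cells stay at 0.5.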

EcoSound AI - Ecosystem Health Analysis Through Sound

Assess ecosystem condition from a .wav file or live microphone. Computes scientific acoustic indices (ACI, ADI, BIO, NDSI), scores ecosystem health 0–100, generates a dashboard with spectrogram/waveform/charts, optionally identifies bird species via BirdNET (Cornell Lab), and compares multiple recording locations side by side. Pure Python, runs locally.
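Of the indices listed, NDSI is the simplest to show: it contrasts acoustic power in a biological band with power in a human-noise band, giving a value between -1 and +1. The sketch below assumes a precomputed power spectrum and uses band edges of 1-2 kHz and 2-8 kHz as defaults; band limits vary between implementations, and the function names are hypothetical.

```python
def band_power(spectrum, low_hz, high_hz):
    """Sum the power of all (frequency_hz, power) bins with low_hz <= f < high_hz."""
    return sum(power for freq, power in spectrum if low_hz <= freq < high_hz)


def ndsi(spectrum, anthro_band=(1000.0, 2000.0), bio_band=(2000.0, 8000.0)):
    """Normalized Difference Soundscape Index: (bio - anthro) / (bio + anthro).

    +1 means only biological sound, -1 only anthropogenic sound.
    The band edges are assumed defaults; adjust them to the reference you follow.
    """
    anthro = band_power(spectrum, *anthro_band)
    bio = band_power(spectrum, *bio_band)
    total = bio + anthro
    if total == 0:
        return 0.0  # no energy in either band
    return (bio - anthro) / total
```

In practice the spectrum would come from a power spectral density estimate of the recording; here it is just a list of `(frequency, power)` pairs.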

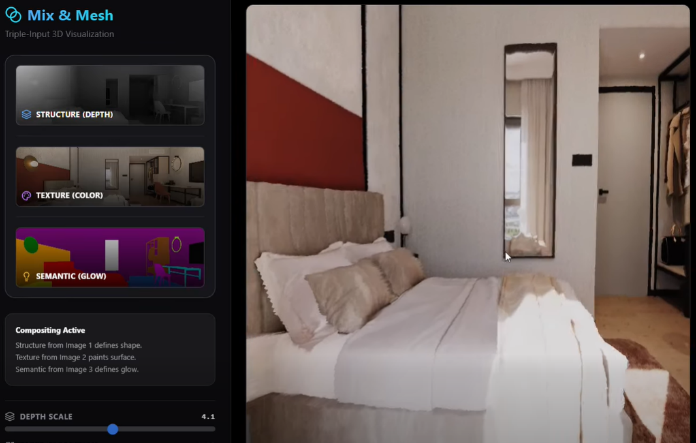

Mix & Mesh - Single Photo to Interactive 3D Scene

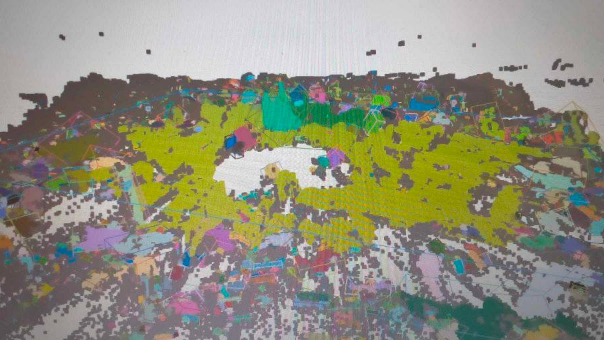

Upload one photo (room, landscape, portrait, object) and the AI instantly generates a depth map, creates semantic segmentation masks (recognizing objects), and fuses them into an interactive 3D scene. Rotate the view, look from different angles, apply effects, or export to 3D format.

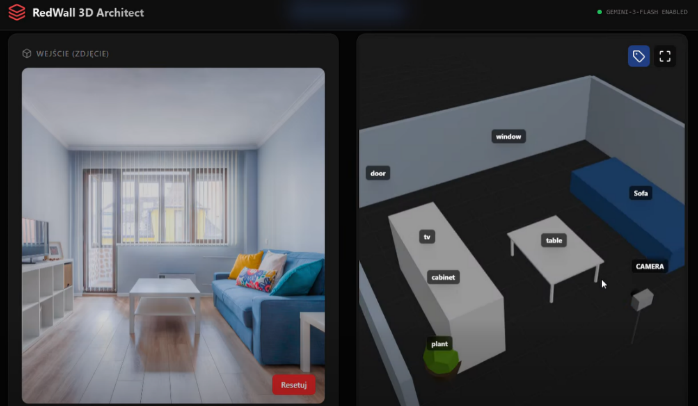

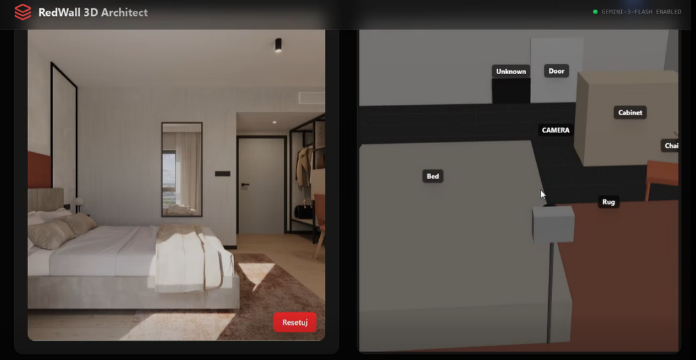

RedWall 3D Architect - Room Photo to 3D Floor Plan

Upload any room photo (even low-quality phone shots) and AI reconstructs the 3D structure, estimates camera position, and recognizes furniture with approximate dimensions. Navigate the scene in 3D, switch between realistic and semantic views. Powered by Gemini 3 Flash. Useful for interior designers and real estate agents.

Obstacle Map - Real-World GPS Traversability Map

A real-world GPS map that models traversable space, not just routes. Features live GPS tracking, 3D building extrusions from OpenStreetMap, and a collision/occupancy heatmap. Built for spatial cognition research, robotics, AR, and accessibility applications.

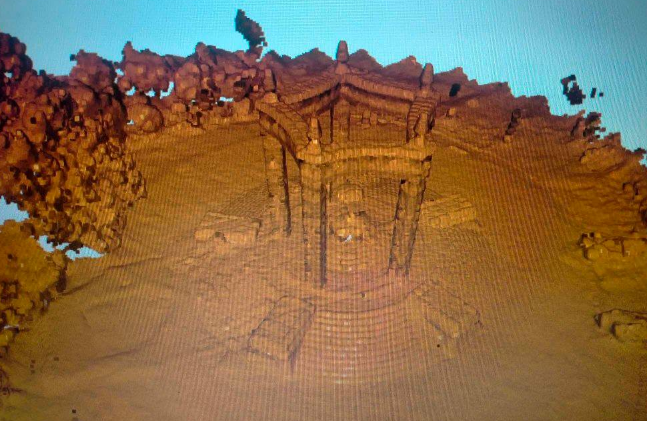

Drone Cloud - Aerial AI Spatial Scanning

Uses a drone and AI to create spatial scans of real environments, which can then be analyzed in Python for distances, spatial orientation, thematic segmentation of objects and their sizes, and occupancy grids. Demonstrates depth colorization, semantic segmentation overlays, and 3D point cloud generation from drone footage.

Robot SLAM Simulator 3D

Interactive SLAM demonstration where clicking any object in a 3D room causes a robot to automatically plan a path, avoid obstacles, and update its environmental map in real time. Shows SLAM algorithms in practice - from robot localization to A* path planning.
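For readers unfamiliar with the planning step, here is a compact A* search on a 4-connected grid with a Manhattan-distance heuristic. It is a generic textbook sketch, not the simulator's code; the grid encoding (`#` for obstacles) and the function name are assumptions.

```python
import heapq


def astar(grid, start, goal):
    """Shortest 4-connected path on a grid of strings where '#' marks an obstacle.

    Returns a list of (row, col) cells from start to goal, or None if unreachable.
    """
    rows, cols = len(grid), len(grid[0])

    def heuristic(cell):
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(heuristic(start), 0, start)]  # (estimated total, cost so far, cell)
    best_cost = {start: 0}
    came_from = {}
    while open_heap:
        _, cost, cell = heapq.heappop(open_heap)
        if cell == goal:
            path = [cell]
            while cell in came_from:  # walk back to the start
                cell = came_from[cell]
                path.append(cell)
            return path[::-1]
        if cost > best_cost.get(cell, float("inf")):
            continue  # stale heap entry, a cheaper route was already found
        r, c = cell
        for nxt in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = nxt
            if 0 <= nr < rows and 0 <= nc < cols and grid[nr][nc] != "#":
                new_cost = cost + 1
                if new_cost < best_cost.get(nxt, float("inf")):
                    best_cost[nxt] = new_cost
                    came_from[nxt] = cell
                    heapq.heappush(open_heap, (new_cost + heuristic(nxt), new_cost, nxt))
    return None
```

In a SLAM setting the grid would be the robot's current map estimate, and the path would be replanned whenever the map changes.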

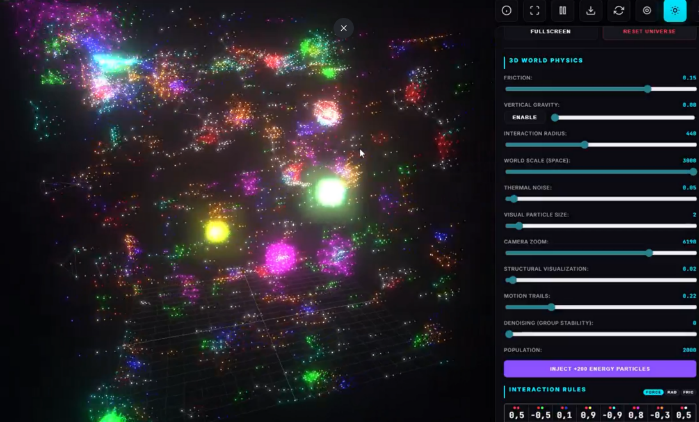

Bio-Evo Sim - Interactive Bio-Evolutionary Particle Simulation

Particles governed by basic interaction rules (attraction/repulsion by type) spontaneously form complex self-organizing structures resembling living organisms - from simple clusters to ecosystems with predation, symbiosis, and reproduction. Adjustable parameters (forces, velocities, friction, particle types) enable exploring infinite "worlds" and discovering new digital life forms.

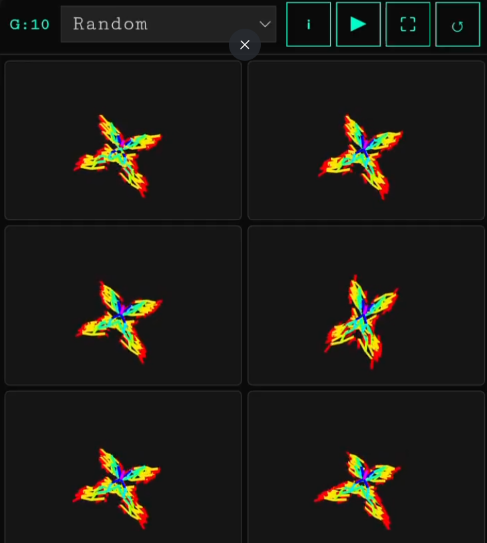

3D Biomorphs - Dawkins' Blind Watchmaker in 3D

A recreation of Dawkins' classic Biomorphs from The Blind Watchmaker, now with behavior mutations in 3D. Simple genetic parameters evolve shapes and movements illustrating how cumulative selection produces complexity without foresight. Also includes the original 2D Biomorphs version as described by Dawkins.

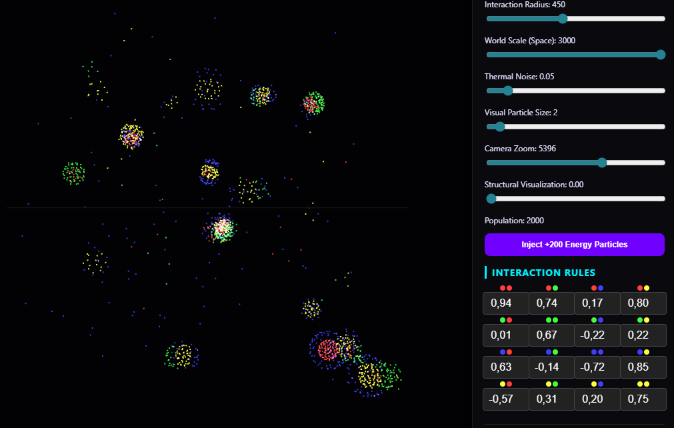

Evolution 3D & PROTOS - Emergent Life Simulations

Two particle-life simulations: Evolution 3D - 3D particles with interaction rule matrices that spontaneously form molecule- and orbital-like patterns; press * for new random rules. PROTOS - 2D emergent life where simple particle interactions produce creature-like behavior. No scripts, no agents - pure bottom-up emergence.
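An interaction rule matrix of this kind can be sketched as follows: `rules[a][b]` says how strongly a particle of type `a` is pulled toward (positive) or pushed away from (negative) a particle of type `b`. The example is 2D, and the force falloff, radius, and friction are illustrative assumptions rather than the simulations' actual settings.

```python
import random


def random_rules(n_kinds, rng):
    """Random attraction/repulsion matrix with entries in [-1, 1]."""
    return [[rng.uniform(-1.0, 1.0) for _ in range(n_kinds)] for _ in range(n_kinds)]


def step(particles, rules, radius=5.0, friction=0.9, dt=1.0):
    """Advance all particles one tick.

    particles: list of dicts with keys x, y, vx, vy, kind.
    The force fades linearly to zero at `radius` (assumed falloff shape).
    """
    for p in particles:
        fx = fy = 0.0
        for q in particles:
            if q is p:
                continue
            dx, dy = q["x"] - p["x"], q["y"] - p["y"]
            dist = (dx * dx + dy * dy) ** 0.5
            if 0.0 < dist < radius:
                strength = rules[p["kind"]][q["kind"]] * (1.0 - dist / radius)
                fx += strength * dx / dist
                fy += strength * dy / dist
        p["vx"] = (p["vx"] + fx * dt) * friction
        p["vy"] = (p["vy"] + fy * dt) * friction
    # Move everything only after all forces were computed from the old positions.
    for p in particles:
        p["x"] += p["vx"] * dt
        p["y"] += p["vy"] * dt
```

The matrix does not have to be symmetric: if type 0 is attracted to type 1 while type 1 is repelled by type 0, a chase emerges, which is the kind of asymmetry that tends to produce lifelike motion.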

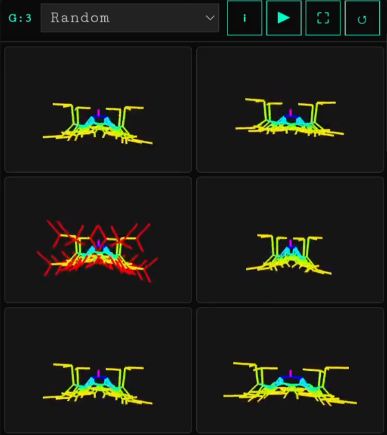

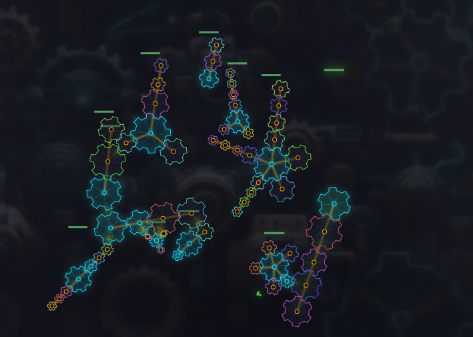

MechEvo - Mechanical Creatures That Dock & Evolve

Mechanical creatures with physical joints and sensors that dock onto each other, form increasingly complex mega-structures, and evolve over generations. Emergent locomotion strategies arise from physical constraints and selection pressure.

Virtual People Simulation - AI-Driven Social World

Observe the interactions, decisions, and lives of AI-driven people in a virtual world. Each character makes autonomous decisions about where to go and what to do, interacts with locations and other characters, and adapts to random weather events. Thought bubbles reveal their real-time activities, emotions, and social connections.

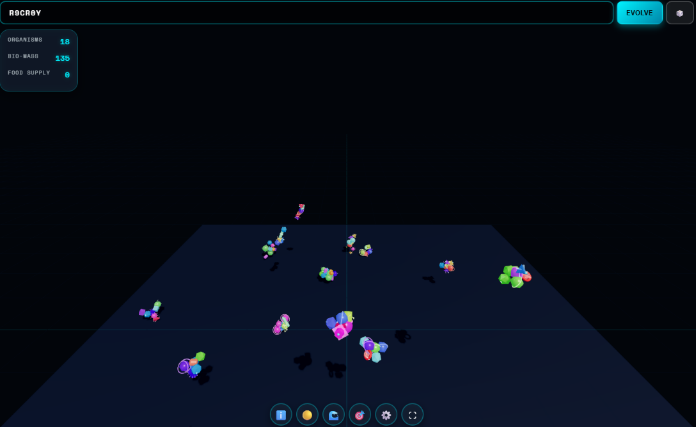

Text-to-Creature - Your Text Becomes Life

Each character in your text is DNA: vowels = muscles, consonants = bones, digits = sensors. Different text sequences create different 3D organisms that crawl, pulse, and move with realistic physics simulation. Type any string and watch it become a living creature.
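The text-to-DNA mapping can be sketched as a simple character classifier. How the app treats spaces, punctuation, or the letter "y" is not stated, so the choices below (ignore non-alphanumeric characters, treat "y" as a consonant) are assumptions, as is the function name.

```python
VOWELS = set("aeiou")


def text_to_genome(text):
    """Map each character to a body-part gene: vowel, consonant, or digit."""
    genome = []
    for ch in text.lower():
        if ch in VOWELS:
            genome.append("muscle")
        elif ch.isalpha():
            genome.append("bone")    # any other letter counts as a consonant
        elif ch.isdigit():
            genome.append("sensor")
        # Spaces and punctuation are skipped (assumption).
    return genome
```

For example, `text_to_genome("Ab1!")` gives `["muscle", "bone", "sensor"]`.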

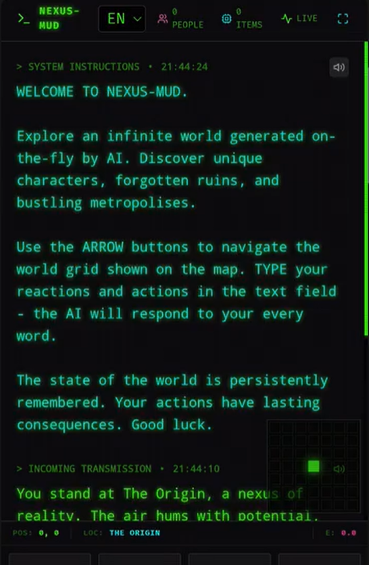

Nexus-MUD - Infinite AI-Generated Text World

Each play-through generates a new universe - unique, unpredictable, governed by its own logic. Explore a vast chessboard-like map; every step generates a new location, landscape, or story in real time via AI. Every location, character, and event is permanently stored in world memory. The further you travel from the starting point, the stranger the world becomes - from classic fantasy to surreal abstract realities.
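The combination of on-demand generation and permanent world memory can be sketched as a cache keyed by map coordinates, with a "strangeness" value that grows with distance from the start. The class, the distance formula, and the `generate` callback (a stand-in for the AI call) are all hypothetical.

```python
class WorldMemory:
    """Generate each location once, then always return the stored version."""

    def __init__(self, generate, max_distance=20):
        self.generate = generate          # stand-in for the AI text generator
        self.max_distance = max_distance  # assumed distance at which strangeness peaks
        self.locations = {}

    def strangeness(self, x, y):
        """0.0 at the starting point, rising linearly to 1.0 far away."""
        return min(1.0, (abs(x) + abs(y)) / self.max_distance)

    def visit(self, x, y):
        if (x, y) not in self.locations:
            self.locations[(x, y)] = self.generate(x, y, self.strangeness(x, y))
        return self.locations[(x, y)]
```

Returning to a coordinate never triggers a second generation call, which is what keeps the world consistent within one play-through.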

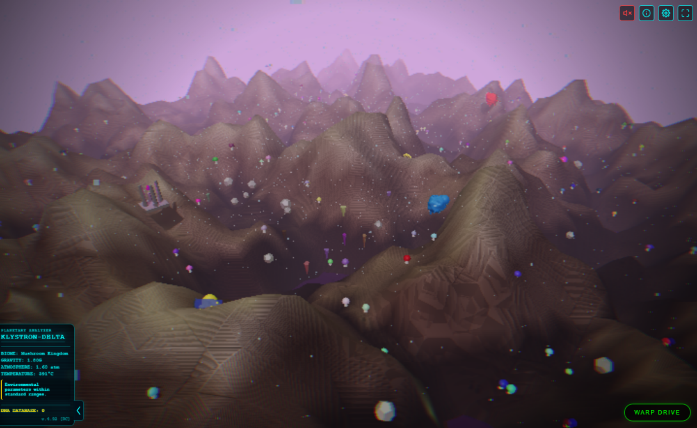

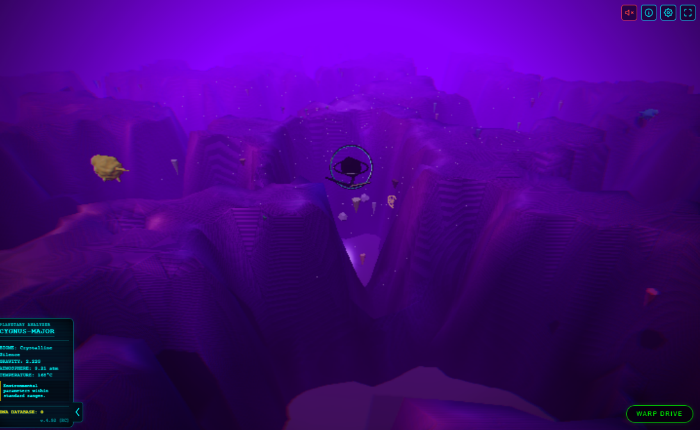

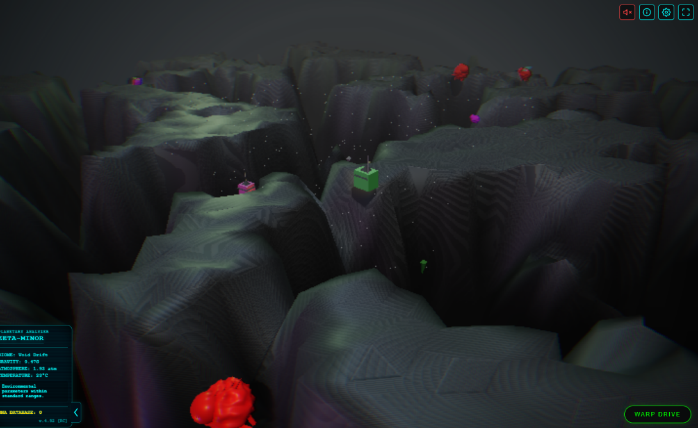

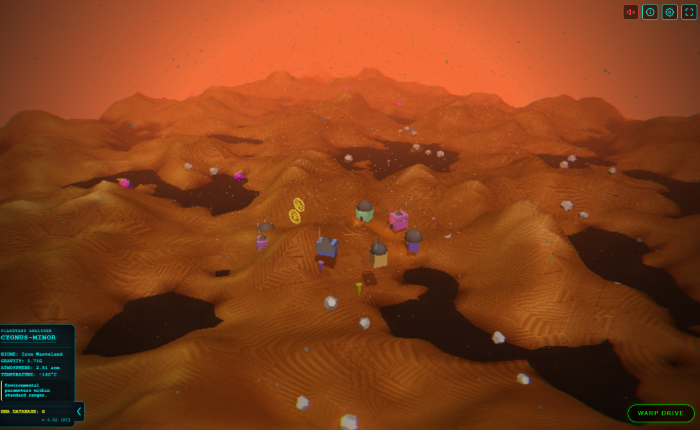

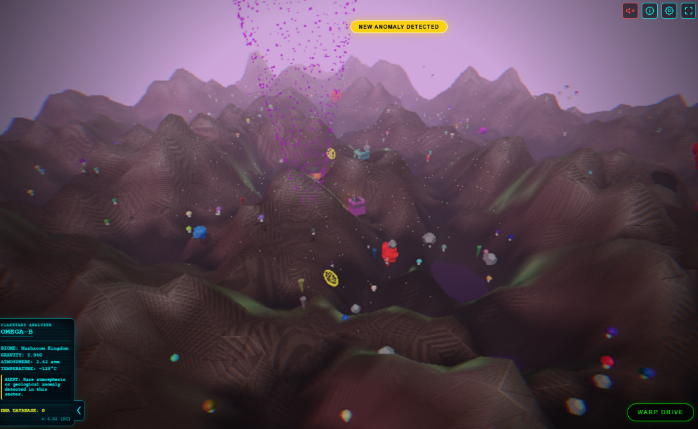

Exoplanet Explorer - Procedural Alien World Discovery

Explore a procedurally generated universe of exoplanets filled with strange lifeforms and unexplained phenomena. Discover unique ecosystems, encounter rare anomalies, and capture extraordinary creatures - each with detailed characteristics. Scan, collect, and build your own cosmic archive as you travel across distant star systems.

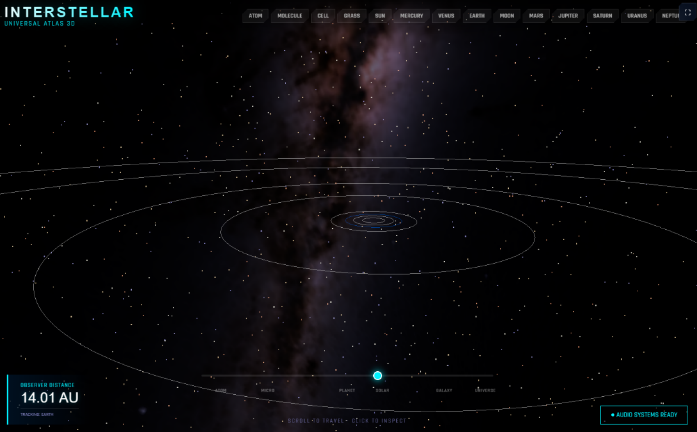

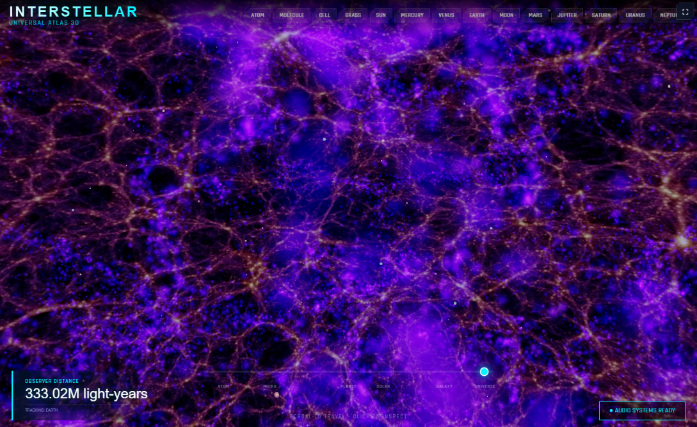

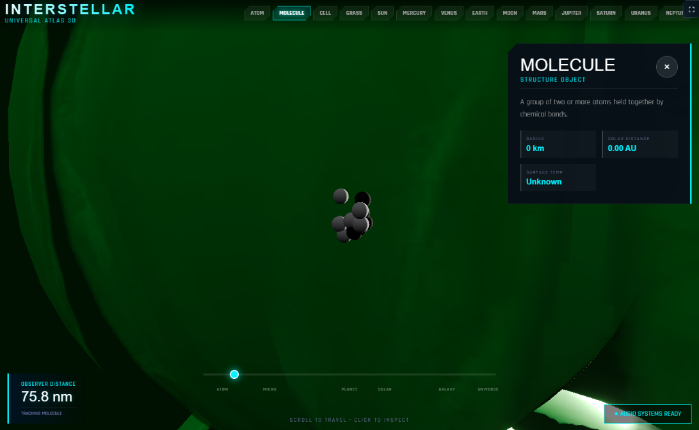

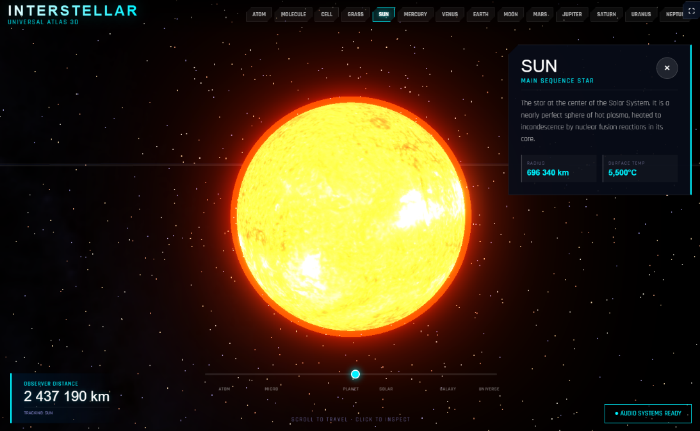

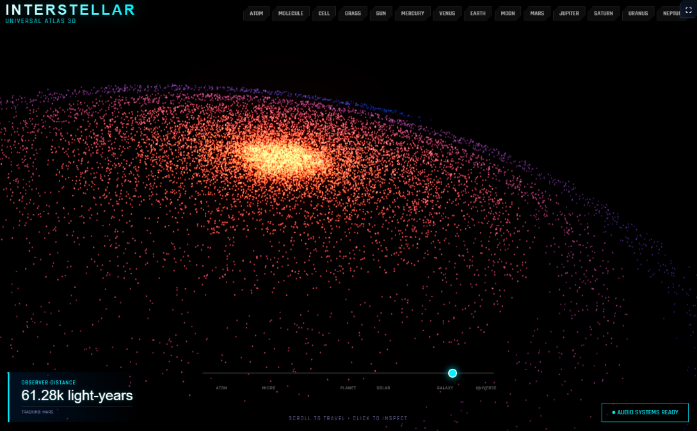

Interstellar - Universal Atlas 3D

Interactive 3D atlas of the universe with seamless scale transitions - from atomic and molecular structures through cells, grass, planets (Mercury to Neptune), star systems, the Milky Way, and large-scale cosmic structure. Spatial exploration with a unified model of the cosmos, real-time audio systems, and clickable object info panels.

Shake It! Cataclysm - Physics Destruction Simulator

Choose your force - earthquake, tornado, or meteor - and watch the city face it in real time. Shake your smartphone to trigger earthquakes. Dynamic physics, collapsing structures, shockwaves, and debris make every scenario unpredictable. City Integrity meter tracks total destruction progress.

Flight Simulator - Fly Anywhere on Earth

A realistic browser-based flight simulator with real-time dynamic weather, a seamless day-night cycle, and hundreds of detailed airports from major hubs to mountain strips and island runways. Complete freedom to choose route, aircraft, and conditions.

DJI Drone Simulator - Realistic Browser Drone Flight

Fly a DJI drone in a large realistic 3D world with buildings, trees, and terrain. Physically accurate flight model, realistic FPV and third-person camera modes, Unreal Engine–level visuals - entirely in the browser. No installation.

AI Kitchen Sim - Webcam-Controlled VR Kitchen

Step into a realistic kitchen in first-person view without controllers or VR headsets. Advanced head and hand tracking via a standard webcam lets you look around, control virtual hands, and physically interact with objects - grab pots, open cabinets, stir ingredients - with realistic physics.

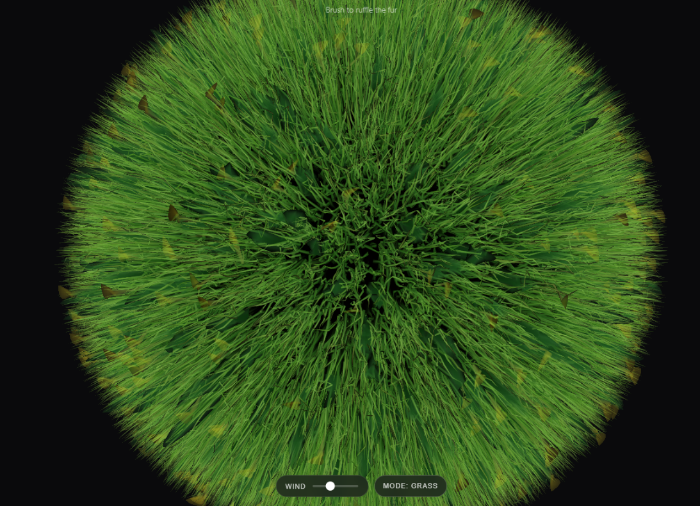

Fur Ball - Tactile Relaxation Experience

A calming, tactile browser experience. Gently pull, brush, and stroke a soft furry sphere rendered with real-time strand simulation. Multiple modes including grass and magma. Designed as a simple, soothing interaction for stress relief and focus.

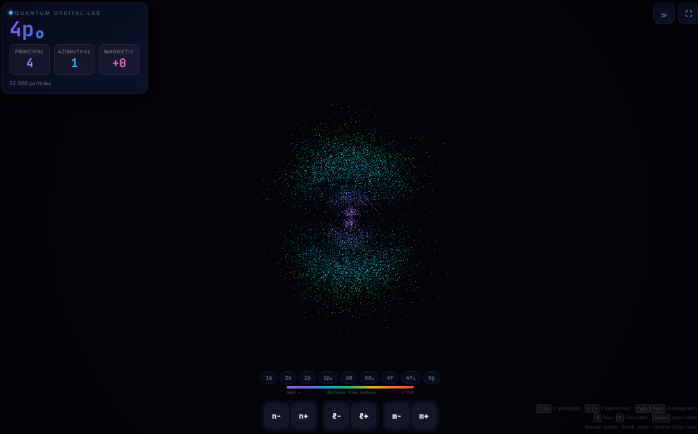

Quantum Orbitals 3D - Real Wave Function Visualization

Interactive 3D visualization of atomic orbitals showing electron probability distributions around the nucleus. Each particle's position is calculated using real quantum wave functions from Schrödinger's equation. Rotate and explore different orbital shapes by adjusting quantum numbers (n, ℓ, m).
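One plausible way to place particles according to a wave function is to sample positions from the probability density |ψ|². The sketch below does this for the simplest case, the hydrogen 1s orbital (n=1, ℓ=0, m=0), in units of the Bohr radius; it is an illustration of the principle, and the visualization's own sampling method may differ.

```python
import math
import random


def sample_1s(n_points, seed=0):
    """Sample (x, y, z) positions from the hydrogen 1s density, in Bohr radii.

    The radial probability density is proportional to r^2 * exp(-2r), which is
    a gamma distribution with shape 3 and scale 0.5. The 1s orbital is
    spherically symmetric, so the direction is uniform on the sphere.
    """
    rng = random.Random(seed)
    points = []
    for _ in range(n_points):
        r = rng.gammavariate(3.0, 0.5)
        cos_theta = rng.uniform(-1.0, 1.0)
        sin_theta = math.sqrt(1.0 - cos_theta * cos_theta)
        phi = rng.uniform(0.0, 2.0 * math.pi)
        points.append((r * sin_theta * math.cos(phi),
                       r * sin_theta * math.sin(phi),
                       r * cos_theta))
    return points
```

The mean sampled radius should come out near 1.5 Bohr radii, the known expectation value for the 1s state. Orbitals with ℓ > 0 need an angular factor as well, typically handled with rejection sampling.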

edu-transformer - GPT Architecture in One Python Script

A free, hands-on GPT course in a single Python script. Same transformer architecture family as GPT-2/3/4, just 8K parameters instead of a reported 1.8T. Run it instead of watching 4-hour lectures: build/train/generate text in 3 seconds, debug mode showing every tensor and attention weight, compare tiny/small/medium models side-by-side, run ablation studies, take quizzes, and use an interactive playground. No GPU needed.
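The core operation of any GPT-style model is causal scaled dot-product attention. The dependency-free sketch below shows a single head operating on plain Python lists; it is a generic illustration written for this page, not an excerpt from the edu-transformer script.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def causal_attention(queries, keys, values):
    """Single-head causal attention over per-token vectors.

    Token t may only attend to tokens 0..t (the causal mask).
    Returns (outputs, attention_weights), one row per token.
    """
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for t, q in enumerate(queries):
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
                  for k in keys[:t + 1]]
        weights = softmax(scores)
        out = [sum(w * v[j] for w, v in zip(weights, values[:t + 1]))
               for j in range(len(values[0]))]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights
```

The first token can only attend to itself, so its attention weight is exactly 1 and its output equals its own value vector; later tokens blend the values of everything before them.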

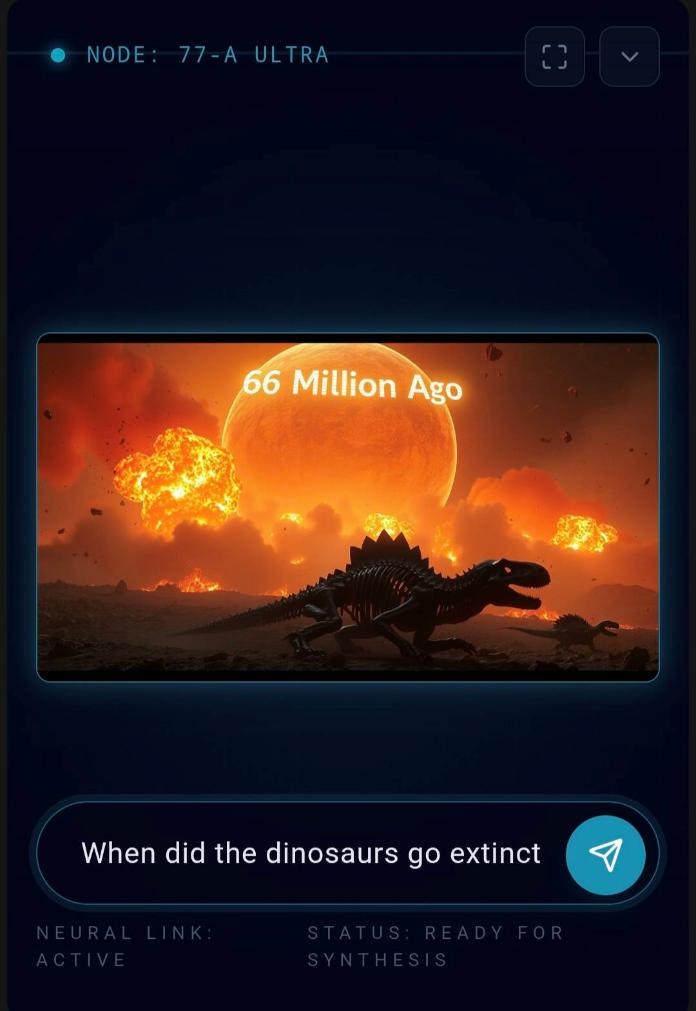

OneLook - Visual Answer Engine

A new kind of AI experience where answers are delivered not as text but as a single visual. Enter any question and instead of reading paragraphs, you instantly see an AI-generated image representing the idea behind your query. Fast, intuitive, entirely visual - turns questions into pictures.

Text input using eye gaze - no keyboard, no mouse. AI predicts next words and phrases; select them just by looking at the screen. Designed for users with limited mobility.

Write with your finger directly on the screen - AI instantly recognizes letters and sentences in real time and converts them to clean text. No keyboard, no stylus.

Real-time text-to-speech communication aid for people who cannot speak. Type a message and it is instantly spoken aloud - fast, simple, barrier-free.

Screen-free haptic navigation for visually impaired users. Silent when on route - increasing vibrations when deviating. No screen needed, works entirely through touch feedback.

Load any image - background is removed and the character is animated. FaceMorph mirrors your real-time facial movements onto any photo. Record and download in seconds.

Control a virtual robotic arm by moving your hand in front of the camera. Works on both laptop and smartphone rear camera. A teleoperation prototype built entirely in the browser.

Build a 3D model of your surroundings through your phone camera - tap to place points and walls, walk around to view from different angles, export as GLB. No ARCore or SLAM required.

Real-time depth map generation from your camera feed. Visualizes scene geometry live - useful for computer vision experiments and spatial understanding demos.

Uses your phone camera and flashlight to detect obstacles in real time. Measures brightness differences between frames to estimate relative distance - no depth sensor needed.

Play a piano using only your hands in front of the camera - no touch required. Two versions with different interaction modes. A creative gesture-control music experiment.

A prototype for controlling a desktop interface using hand movements and camera - no mouse, no keyboard. Pinch gesture performs a click. A proof-of-concept for gesture-driven OS control.

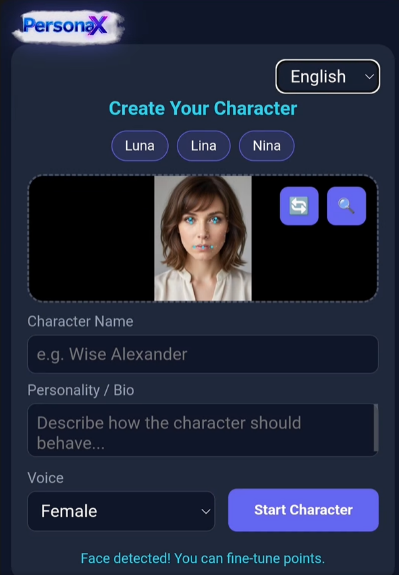

PersonaX - Customizable Real-Time Virtual Persona

Talk in real time with a virtual persona. Upload a face, set a personality, tweak the voice, and chat live. Supports 3 languages, fully customizable characters including face detection fine-tuning and voice selection.

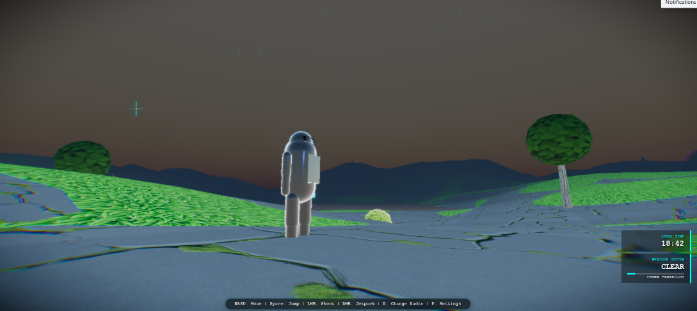

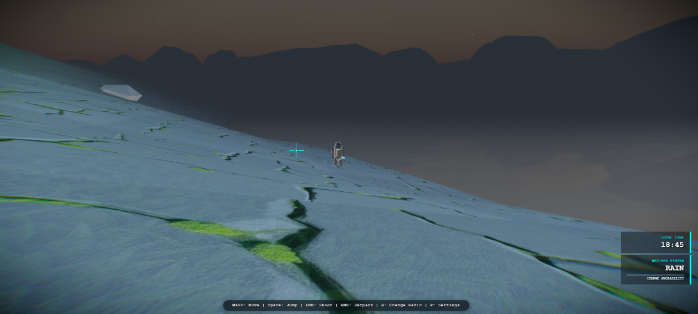

Advanced 3D Web Environment - Unreal-Level Browser World

A full Unreal Engine–style interactive 3D world running entirely in the browser. Swim, fire lasers, watch procedurally animated animals, and explore dynamic weather systems - all in real time, no installation.

AI Channel
